Not-knowing discussion #11: A mindset for not-knowing (summary)

14/12/2023 ☼ not-knowing ☼ ii ☼ iisummary

This is a summary of the eleventh session in the Interintellect series on not-knowing, which happened on 16 November 2023, 2000-2200 CET.

Upcoming: “Broad approaches,” 21 December 2023, 1700-1900 CET (note the change from the usual time). Episode 12 of my Interintellect series on not-knowing is about broad approaches to action informed by the mindset of not-knowing. We’ll discuss four approaches: Thinking in small experiments (and avoiding “bets,” big or otherwise); experimentation designed for exploring specific types of not-knowing; deck-stacking; and goal superordination. First-timers welcomed with enthusiasm. More details and tickets here. As usual, get in touch if you want to come but the $15 ticket price isn’t doable — I can sort you out. And here are some backgrounders on not-knowing from previous episodes.

A mindset for not-knowing

Reading: A mindset for not-knowing.

Participants: Razan B., Susannah F., Blanka H., Trey L., Kaleigh M., Indy N.

The words we use to describe not-knowing matter, because words shape how we think and frame what we pay attention to. Because of this, the foundation of a toolkit for relating productively to not-knowing is a mindset which explicitly acknowledges the existence of different types of not-knowing.

In this context, a “mindset” is a set of assumptions which shape what we perceive about the situation, how we interpret what it means, and how we decide to act on it.[^1]

Discussion highlights

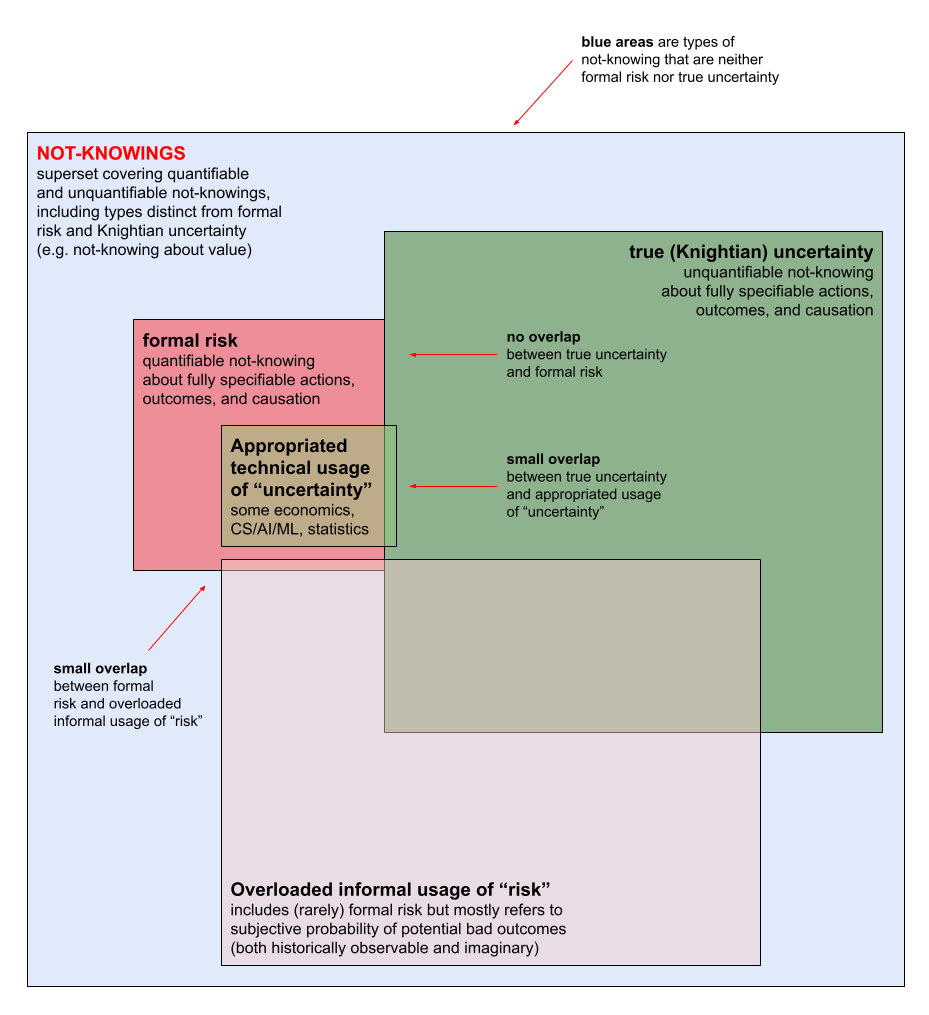

- I use the phrase “not-knowing” to refer to all types of partial knowledge, and I specify which type of not-knowing I mean. Risk is one type of not-knowing; others include not-knowing about possible actions and outcomes, causation, and valuation. The diagram above illustrates the confusing relationship between these types of not-knowing and commonplace words like “risk” and “uncertainty.”

- Simply put, a mindset for not-knowing consists of explicitly recognising the difference between risk and non-risk types of not-knowing. Risk and true uncertainty are different types of not-knowing, and true uncertainty comprises not-knowing about actions, outcomes, causation, and relative value.

- A mindset for not-knowing is especially important in some settings. These may be good settings to focus on when articulating a language for describing a mindset for not-knowing and developing toolkits for relating to not-knowing:

- Medical care and patient communication. Diagnosing and treating health problems combines both risk and true uncertainty (because the human body is a complex and incompletely understood system). Treatment outcomes are often more uncertain the more severe or existential the health problem being treated. For patients, managing care well depends on recognising uncertainty for what it is and not mistaking it for risk — this prevents undue optimism/pessimism about outcomes, reduces wishful thinking/cruel optimism, encourages living in the moment, and increases compliance with treatment. This means care providers must communicate uncertainty and risk accurately to patients and their families as well.

- AI/ML development and deployment. Limited data learning, model bias, appropriate deployment contexts, and other vital technical and sociotechnical AI problems depend on distinguishing between partial knowledge that can appropriately be quantified (risk) and partial knowledge that cannot be quantified accurately (true uncertainty). This is why the current conflation of uncertainty with risk in the AI/ML space can impede model development (and has done so), lead to AI systems whose outputs are hard to interpret or biased, and make it hard to figure out which types of human work AI systems can replace and should be designed to replace. We also discussed AI/ML’s uncertainty problem in a previous discussion, and an interesting open question came up about whether different types of not-knowing can be represented symbolically using non-numeric systems.

- Business, especially in rapidly changing industries or markets. Nearly every really important and difficult problem businesses face is one of true uncertainty, not risk. Examples include figuring out how to create new products to stay relevant in the face of new competitors, dealing with complex and unpredictable geopolitical contexts (e.g. if operating in Russia when the war with Ukraine broke out), or responding to unexpected regulation affecting business practices. Poor decisionmaking (sometimes with devastating business consequences) results from misidentifying uncertain situations as risky, or from falsely believing that uncertainty has been eliminated through risk-management processes.

- Wicked problems. Wicked problems are difficult to solve because they are inherently uncertain (they cannot be definitively formulated, criteria for successful solutions are unclear or unstable, the causal system cannot be exhaustively described, etc.), so a clearer understanding of uncertainty might make them more tractable.

- Translation. Humans are meaning-making entities, and languages are symbolic systems for representing meaning with necessarily imperfect fidelity (because not all knowledge can be made fully explicit). Translation is an exercise in subjective meaning-making: it must navigate the uncertainty that arises from slippage and mismatches of nuance between how two symbolic systems represent meaning. Understanding uncertainty better (in particular the strategic use of ambiguity) enables translations that capture nuances of the original meaning better than statistical or even literal translations do. For an object lesson in how awareness of uncertainty enables subjectively better translation, see this pair of translations of the same poem by Joseph Brodsky.

- A mindset appropriate to not-knowing is generally useful even outside the particular settings above.

- The ability to discriminate between risk and non-risk types of not-knowing allows better strategy and tactics in professional, personal, and interpersonal life — it therefore seems plausibly universally useful.

- See, for instance, how understanding not-knowing contributes to individual happiness, or the relationship between innovation and not-knowing (which often has business implications).

- Unfortunately, many interrelated obstacles lie in the way of developing a mindset for not-knowing. This is true both in the sense of collectively figuring out the concrete details of such a mindset and individually acquiring such a mindset.

- Thinking about different types of not-knowing is conceptually harder than thinking only about risk. Thinking about risk involves only an execution challenge (to oversimplify: “doing the math” on expectations), while thinking about not-knowing generally is both an execution and an epistemic challenge.

- Weak demand for a mindset for not-knowing. This can be due to a delusional worldview (failure or inability to diagnose uncertain situations correctly) and/or fear of uncertainty. Fear of uncertainty conditions how we think about uncertainty because of the link between affect and cognition, and makes situations of not-knowing feel oppressively hard to deal with.

- Strong demand for ostensibly robust methods for dealing with the unknown. These methods are only ostensibly robust because they are mistakenly believed to be adequate substitutes for a mindset for not-knowing. They are nearly always risk-based frames for interpreting the world, many of which have reached high levels of technical refinement. Demand for these crowds out the already weak demand for the real thing.

- Confused terminology about uncertainty and risk. Defining terms clearly is one of the most basic, difficult, and important things to do when figuring out or learning something new, but it isn’t fun, so we tend to skip it. On top of that, various parties have cynical reasons for calling risky situations “uncertain” or uncertain situations “risky.” The resulting terminological confusion impedes clarity and understanding.

- Relevant learning feedback loops are rarely available, slow, and/or hard to attribute. Learning happens through repeated exposure to causally attributable feedback loops between actions and outcomes. A mindset for not-knowing develops through repeated exposure to not-knowing and observing the results of acting differently on it. Such loops are rarely accessible because people tend to avoid not-knowing. When they are accessible, there is often a long interval between taking an action in a situation of true non-risk not-knowing and seeing its results. It is also often difficult to connect particular actions with particular outcomes.

- Powerful social obstacles prevent expressions of epistemic uncertainty. We valorise confidence socially, but don’t distinguish between social and epistemic confidence. This makes it hard to express epistemic uncertainty, because doing so diminishes perceived confidence. Put more simply, there is intense pressure in all social settings (both professional and nonprofessional) to always know the answer. This is likely connected to pedagogical practices focused on teaching students how to find “right” answers instead of helping them learn how to deal with situations where “right” answers are not yet (and may never be) available. As a participant pointed out, “not knowing evokes the possibility of a multiplicity of potential answers where even what counts as ‘right’ is uncertain and unstable.”

- But there may be reasons to be optimistic about the possibility of developing a mindset for not-knowing.

- The epistemic shift needed seems feasible, though difficult. Other difficult epistemic shifts took a long time to happen, and entire scholarly and practical communities had to form before they did. Two examples are i) the formal and practical distinction between causation and correlation, and ii) the replacement of group selection with kin selection in evolutionary biology.

- Framing uncertainty as generative or productive, rather than only as a threat, seems more compelling. This framing is already widespread in creative and artistic settings, and in small-scale regenerative agricultural practice.

- Tools already exist that help develop a mindset for not-knowing in particular settings. They all need improvement, but at least there is a foundation to build on. Examples include i) medical research on how to communicate uncertain prognoses to patients and on using hope theory in communicating uncertain prognoses, ii) uncertainty-accommodating practices in regenerative agriculture, iii) existing religious concepts and philosophical practices for building capacity to relate to uncertainty, and iv) a tool for enriching access to not-knowing learning loops.
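To make the first obstacle listed above concrete: under risk, “doing the math” on expectations is a mechanical calculation, because every outcome and its probability are known. The Python sketch below uses a hypothetical `expected_value` function (not something from the discussion) that computes an expectation when probabilities are supplied, and deliberately returns `None` when they are not, standing in for non-risk not-knowing where no defensible probabilities exist. It is a simplified illustration under those assumptions, not a method for handling not-knowing.

```python
from typing import Optional, Sequence


def expected_value(
    outcomes: Sequence[float], probabilities: Optional[Sequence[float]]
) -> Optional[float]:
    """Hypothetical helper: return the expected value in a situation of risk.

    Risk here means every possible outcome is listed and each one has a known
    probability. If probabilities are unknown (None), there is no expectation
    to compute, so the function returns None instead of guessing.
    """
    if probabilities is None:
        # Non-risk not-knowing: no defensible probabilities, so no math to "do".
        return None
    if len(outcomes) != len(probabilities):
        raise ValueError("each outcome needs exactly one probability")
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Risk: the expectation is just a probability-weighted sum.
    return sum(o * p for o, p in zip(outcomes, probabilities))


# Risk: a fair coin bet that pays 10 on heads and loses 4 on tails.
print(expected_value([10.0, -4.0], [0.5, 0.5]))  # prints 3.0
# Not-knowing: the payoffs are listed, but their probabilities are unknown.
print(expected_value([10.0, -4.0], None))  # prints None
```

The sketch only covers missing probabilities. Not-knowing about actions or outcomes would mean the `outcomes` list itself is incomplete, which no check inside the function can detect; that gap is part of why thinking about not-knowing is an epistemic challenge and not only an execution one.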

Fragmentary ideas/questions that came up which seem valuable

- Is it feasible to develop symbolic representation systems for different types of non-risk not-knowing? And — this would be much harder but much more valuable — is it feasible to develop such systems with computational approaches so that symbolic representations of non-risk not-knowing could be computable in conjunction with symbolic representations of true risk (i.e., with numerically expressed probabilities)? I wrote a bit more about this in the context of several important AI research and deployment questions.

Some links shared by participants

- Boys in White, an ethnography of medical training (by Howie Becker).

- The Cunning Man, a book about (among other things) a remarkable diagnostician who uses not-knowing in his practice (by Robertson Davies).

- A paper about the limits of expert knowledge in public policy decisionmaking, including a discussion of the limits of qualitative descriptors of uncertainty (by M. Granger Morgan).

- A paper recommending the use of numeric representation of uncertainty for intelligence work (by Sherman Kent).

- A paper about the intelligence community’s numeromania in relation to uncertainty (by Nate Kreuter).