Overloading and appropriation

9/6/2023 ☼ not-knowing

Clearing the ground

So far, a huge amount of the work of wrapping my head around not-knowing has gone into clearing the ground. By this I mean working out how we use words like “risk” or “uncertainty.” It turns out there are at least two types of inaccuracy in usage:

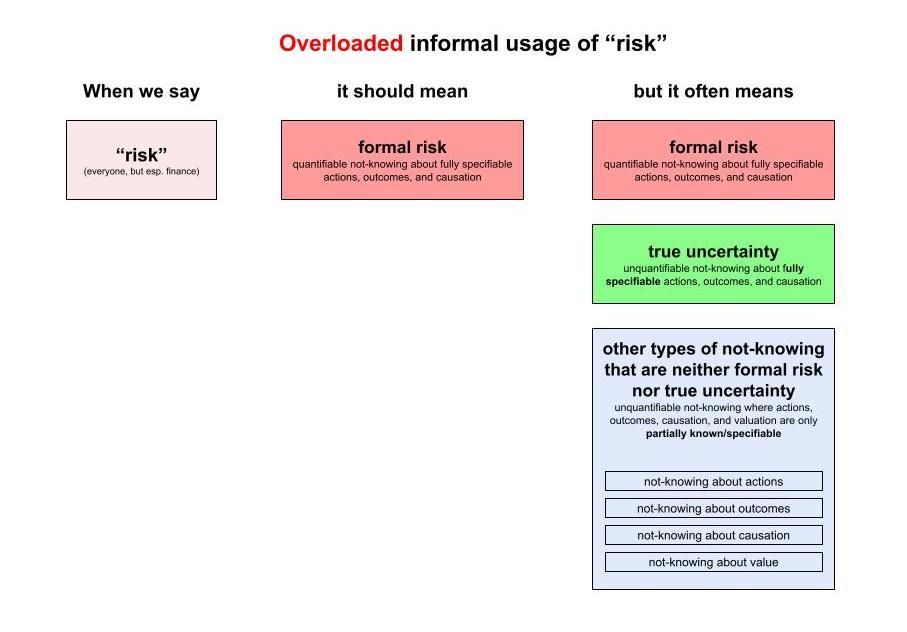

- Overloading “risk”: Using “risk” to refer to many different situations of not-knowing, nearly all of which are not formal risk.

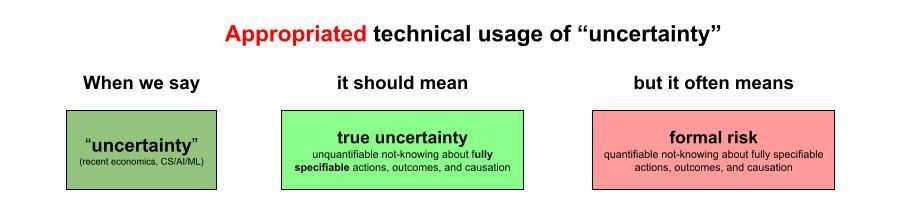

- Appropriating “uncertainty”: Using “uncertainty” to imply a reference to Knightian uncertainty but actually referring to formal risk.[1]

Clearing the ground is important because

[What we call something] → [What we think it is] → [How we choose to act on it] → [Outcomes].

Overloading “risk”

I’m using overloading in the same sense as in function overloading in software development, to mean a situation where different functions are all given the same name. This is what happens with the word “risk” in informal use. Nearly every real-world situation we call “risky” is actually a situation which isn’t formally risky. Instead it is a situation of non-risk not-knowing where formal risk conditions don’t hold.
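For readers who don't write software, here is a minimal sketch of what one name standing for several different functions can look like. Python has no built-in function overloading of the kind found in Java or C++, so this sketch approximates it with `functools.singledispatch` from the standard library. The function name `describe` and its messages are hypothetical, chosen only for illustration.

```python
from functools import singledispatch


@singledispatch
def describe(x):
    # Fallback used when no version is registered for the argument's type.
    raise NotImplementedError(f"no version of describe for {type(x).__name__}")


@describe.register
def _(x: int) -> str:
    # The version of "describe" that runs for integers.
    return f"integer {x}"


@describe.register
def _(x: str) -> str:
    # A different function, reached through the same name, for strings.
    return f"string {x!r}"


int_result = describe(3)       # runs the integer version
str_result = describe("risk")  # runs the string version
```

The caller writes `describe` in both cases, but what actually runs depends on what is passed in. In software the dispatch rule is explicit; with the word “risk” in informal use, nothing tells us which meaning is in play.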

When we call a situation “risky,” we act on it using methods that only work well when the conditions of formal risk apply, as they do when betting on the outcome of tossing a fair coin or throwing fair dice. (“Formal risk methods” are things like expected value analysis, cost-benefit analysis, etc.) When formal risk conditions don’t hold, formal risk methods for deciding how to act also don’t work. This causes problems big and small, including the mismanagement of COVID-19 in the pandemic’s early days and the 2008 Global Financial Crisis.
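To make “expected value analysis” concrete, here is a minimal sketch for the fair-die and fair-coin cases. The function name `expected_value`, the payoffs, and the check that probabilities sum to one are my assumptions for illustration. The check stands in for the idea that the calculation needs a complete list of outcomes with known probabilities.

```python
from fractions import Fraction


def expected_value(outcomes):
    """Return the probability-weighted average payoff.

    `outcomes` is a list of (probability, payoff) pairs. The calculation
    only makes sense if the probabilities cover every possible outcome,
    so we refuse to proceed when they do not sum to exactly 1.
    """
    outcomes = list(outcomes)
    total_probability = sum(p for p, _ in outcomes)
    if total_probability != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(p * payoff for p, payoff in outcomes)


# A fair six-sided die: each face has probability 1/6, payoff = face value.
fair_die = [(Fraction(1, 6), face) for face in range(1, 7)]
die_ev = expected_value(fair_die)

# A fair coin bet (hypothetical stakes): win 1 on heads, lose 1 on tails.
coin_bet = [(Fraction(1, 2), 1), (Fraction(1, 2), -1)]
coin_ev = expected_value(coin_bet)
```

Here `die_ev` works out to 7/2 and `coin_ev` to 0. Every input to the calculation is a known outcome with a known probability. In a situation where those inputs can't be filled in honestly, the arithmetic still runs on whatever numbers are supplied, which is part of why these methods sneak in so easily.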

For a lot more detail on how we overload “risk,” how easily formal risk methods sneak into our thinking about “risky” situations, and why this is a problem, check out this essay on how to think more clearly about risk, this essay on the insidiousness of formal risk methods, and the summary of the fourth discussion session on not-knowing.

Overloading “risk” means using “risk” (a word which should have a narrow meaning of “formal risk”) to also describe many situations of not-knowing that are not formally risky.

Appropriating “uncertainty”

Important note: This is a work in progress. My criticisms of methods, conclusions, and syntheses below are provisional. I especially want to make this section better with the help of comments — so tell me where I’m wrong or need to be clearer!

For now I’m calling the second form of inaccuracy appropriated “uncertainty.” This essay is about appropriation of this particular sort.

“Appropriation” here means using the word “uncertainty” in a way that does violence to its meaning as unquantifiable not-knowing about a situation (i.e., Knight’s definition). Knight’s definition for “uncertainty” should prevail here because his definition is completely non-overlapping with the definition for “formal risk” — and non-overlapping definitions help get us to clarity.

For Knight, true uncertainty is not-knowing that is “not susceptible to measurement and hence to elimination.” True uncertainty is defined by the unquantifiability and unmanageability of not-knowing (as opposed to risk, which is not-knowing which can be quantified and thus managed and eliminated).

The appropriative strategy seems to be to use “uncertainty” to describe a form of not-knowing that can be quantified and thus can be made controllable. An example from machine learning might illustrate what I mean by this.

Appropriating “uncertainty” means using “uncertainty” for many things that all imply aspects of true uncertainty but that, when you look closely at how they are defined (explicitly or implicitly in use), resolve to forms of not-knowing so restricted that they essentially constitute formal risk.

[1] 🙏 Diana Kudayarova and Tse Wei Lim for helping me figure out that these two words are appropriate in the specific contexts where they’re used here.